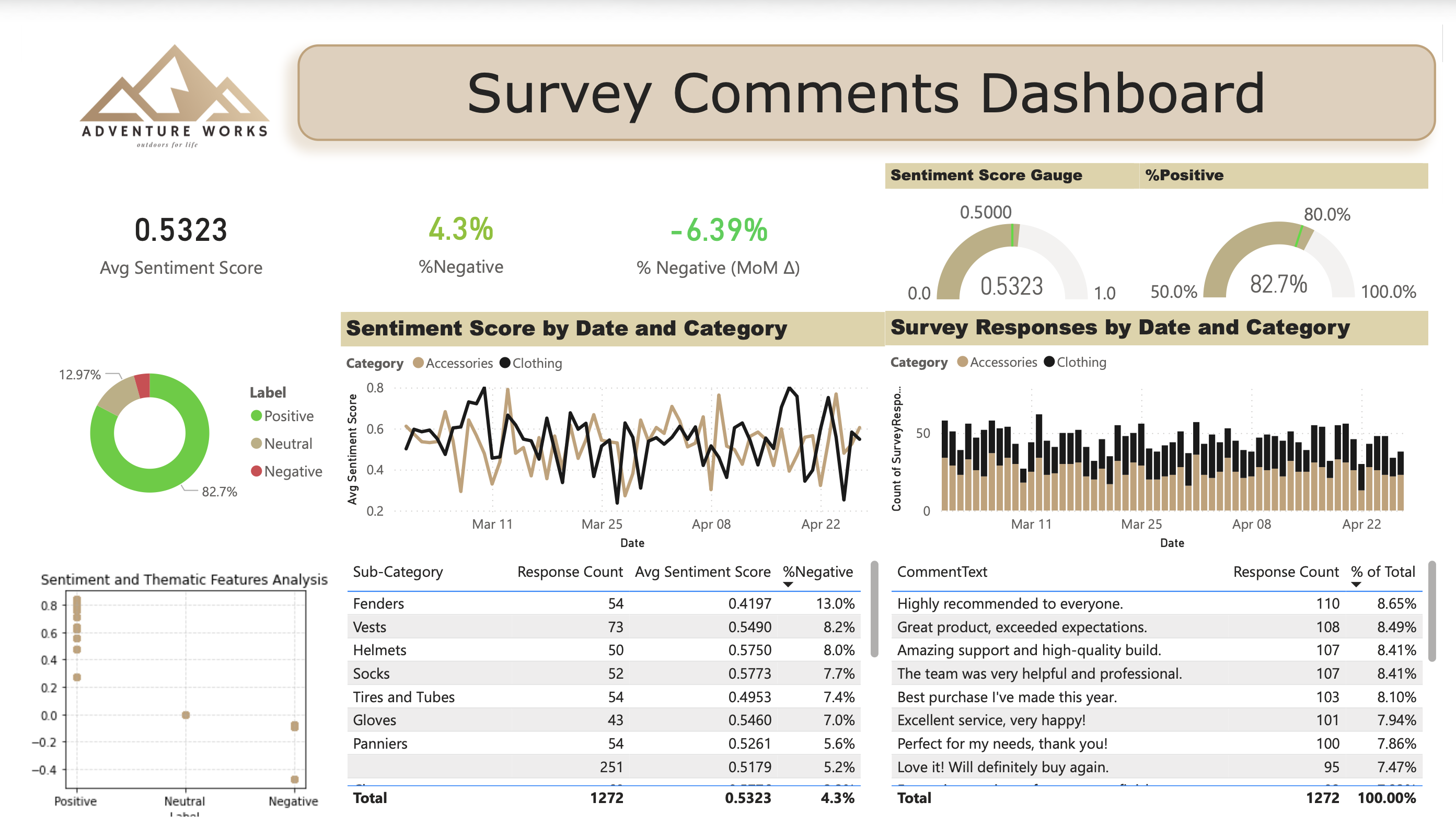

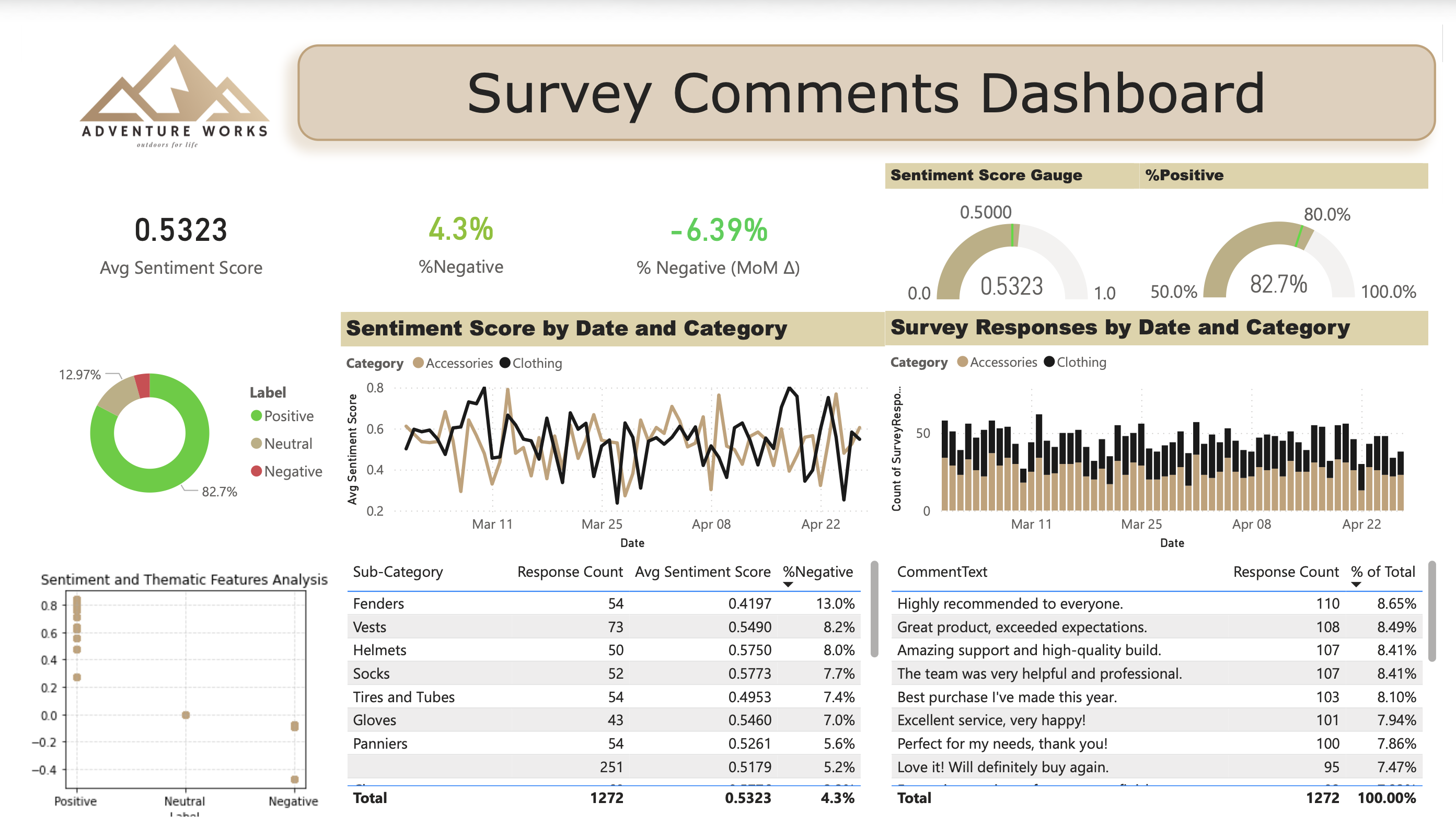

Organizations frequently collect large volumes of qualitative feedback through surveys, customer comments, and operational notes, yet much of this information remains underutilized because it cannot be easily integrated into structured analytics workflows. In this project, I developed a Python-based natural language processing pipeline using libraries such as NLTK, spaCy, and scikit-learn to transform unstructured text data into structured analytical features. The workflow extracts sentiment signals and thematic patterns from raw qualitative responses, enabling the data to be aggregated, quantified, and aligned with operational performance metrics.
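As a rough illustration of the thematic side of such a pipeline, the sketch below counts how many comments mention each term and returns the most common ones. It is a simplified stand-in, not the project's actual code: it uses only the Python standard library rather than NLTK, spaCy, or scikit-learn, and the stopword list, function names, and sample comments are all hypothetical.

```python
import re
from collections import Counter

# Hypothetical, deliberately tiny stopword list. A real pipeline would more
# likely rely on the stopword lists shipped with NLTK or spaCy.
STOPWORDS = {"the", "a", "an", "and", "was", "is", "to", "of", "for",
             "in", "it", "i", "my", "but", "very", "too", "at"}


def tokenize(text):
    """Lowercase the text, keep alphabetic tokens, and drop stopwords."""
    return [t for t in re.findall(r"[a-z']+", text.lower()) if t not in STOPWORDS]


def top_terms(comments, n=3):
    """Return the n terms that appear in the most comments.

    Each term is counted once per comment (document frequency). Ties are
    broken alphabetically so the result is deterministic.
    """
    counts = Counter()
    for text in comments:
        counts.update(set(tokenize(text)))
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [term for term, _ in ranked[:n]]


# Made-up sample comments for illustration only.
comments = [
    "The checkout wait was too long",
    "Long wait at checkout, but friendly staff",
    "Staff was friendly and helpful",
]
print(top_terms(comments, n=5))
```

Counting document frequency rather than raw frequency keeps one long, repetitive comment from dominating the term list. A topic model or TF-IDF weighting would be the natural next step when moving to scikit-learn.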

By converting subjective feedback into measurable indicators, the analysis allows qualitative customer experience signals to be incorporated into KPI reporting and decision-support dashboards, providing leadership with a more complete view of operational performance.

While quantitative metrics such as conversion rates, operational throughput, and service KPIs provide important signals about system performance, they often fail to explain why performance is changing. Many organizations attempt to address this gap by collecting open-ended survey responses and customer feedback; however, qualitative text data is inherently difficult to analyze at scale.

Manual review processes are slow, subjective, and impractical when datasets grow to hundreds or thousands of responses. As a result, valuable context about customer experience and operational issues often remains buried within unstructured comments, disconnected from the quantitative performance metrics used in executive reporting.

This creates a critical visibility gap where organizations can detect performance changes but struggle to understand the underlying drivers behind them.

The objective of this project was to develop a reproducible workflow that converts qualitative customer feedback into structured sentiment metrics. Using Python and natural language processing techniques, the goal was to process raw text responses, classify sentiment, and produce an analytical dataset that could be integrated into reporting tools. This approach allows qualitative feedback to be measured, aggregated, and visualized alongside traditional performance metrics.
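The process-classify-structure flow described above can be sketched in a few lines. This is a minimal example under stated assumptions, not the project's implementation: the word lexicon, the 0.25 threshold, and the helper names are hypothetical, and a toy dictionary lookup stands in for a real sentiment model such as a curated lexicon or a trained classifier.

```python
import re

# Toy sentiment lexicon, purely illustrative. The weights are invented.
LEXICON = {
    "great": 1.0, "friendly": 0.5, "helpful": 0.5,
    "slow": -0.5, "rude": -1.0, "broken": -1.0,
}


def score_comment(text):
    """Average the lexicon weights of matched tokens; 0.0 if nothing matches."""
    tokens = re.findall(r"[a-z']+", text.lower())
    hits = [LEXICON[t] for t in tokens if t in LEXICON]
    return sum(hits) / len(hits) if hits else 0.0


def label_for(score, threshold=0.25):
    """Map a numeric score to a label. The threshold is an assumed cutoff."""
    if score >= threshold:
        return "positive"
    if score <= -threshold:
        return "negative"
    return "neutral"


def build_rows(comments):
    """Turn raw comment strings into structured rows ready for a reporting tool."""
    rows = []
    for comment_id, text in enumerate(comments, start=1):
        score = score_comment(text)
        rows.append({
            "comment_id": comment_id,
            "comment_text": text,
            "sentiment_score": score,
            "sentiment_label": label_for(score),
        })
    return rows


# Made-up sample comments for illustration only.
rows = build_rows([
    "Staff was friendly and helpful",
    "The kiosk was broken and service was slow",
    "I visited on Tuesday",
])
for row in rows:
    print(row["comment_id"], row["sentiment_label"], row["sentiment_score"])
```

Each row is a plain dictionary, so the result can be written to CSV or loaded into a data frame without further reshaping.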

Output dataset fields: comment_id, comment_text, label (if supervised), sentiment_score, sentiment_label, themes / topic_id, top_terms

Transforming qualitative feedback into quantitative sentiment scores allows organizations to move beyond static survey summaries and manual comment review toward a scalable, continuously updated view of the customer experience. When integrated into analytics workflows and reporting dashboards, sentiment trends can be monitored alongside operational KPIs, providing an early indication of emerging service issues or shifts in customer perception that may not yet appear in traditional performance metrics.
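One way to line sentiment up with KPIs is to aggregate scored comments by reporting period and attach the KPI value for the same period. The sketch below assumes each scored comment carries a `week` key and that a KPI is available per week. The `week` field, the KPI values, and the sample scores are hypothetical and exist only to show the shape of the output.

```python
from collections import defaultdict
from statistics import mean

# Made-up scored comments; in practice these would come from the sentiment step.
scored = [
    {"week": "2024-W01", "sentiment_score": 0.5},
    {"week": "2024-W01", "sentiment_score": 0.5},
    {"week": "2024-W02", "sentiment_score": -1.0},
    {"week": "2024-W02", "sentiment_score": -0.5},
    {"week": "2024-W02", "sentiment_score": 0.5},
    {"week": "2024-W02", "sentiment_score": 0.0},
]

# Hypothetical operational KPI per week (for example, an on-time service rate).
kpi_by_week = {"2024-W01": 0.91, "2024-W02": 0.88}


def weekly_summary(scored_rows, kpis):
    """Aggregate sentiment per week and attach the KPI for the same week."""
    by_week = defaultdict(list)
    for row in scored_rows:
        by_week[row["week"]].append(row["sentiment_score"])

    summary = []
    for week in sorted(by_week):
        scores = by_week[week]
        summary.append({
            "week": week,
            "n_comments": len(scores),
            "mean_sentiment": mean(scores),
            # Share of comments with a score below zero.
            "share_negative": sum(s < 0 for s in scores) / len(scores),
            # None when no KPI was reported for that week.
            "kpi": kpis.get(week),
        })
    return summary


summary = weekly_summary(scored, kpi_by_week)
for line in summary:
    print(line)
```

Reporting the share of negative comments next to the mean score is a deliberate choice here: a handful of very negative comments can be hidden by an average, while a rising negative share is easier to spot on a dashboard.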

By identifying these patterns earlier, organizations can investigate root causes and address service gaps before dissatisfaction spreads through reviews, social media, or word of mouth. Open-ended survey comments then become a practical tool for protecting brand reputation and improving service outcomes.
